Sometimes I feel I am not needed anymore. I admit, I love business process testing. I enjoy the art of picking the right business process to test. I firmly believe in the power of enhancing requirements by taking a system view, maybe even a “systems thinking” view, although I am not yet that firm in this method. But in the end, we get business processes to be verified. And this is what I call end-to-end (e2e) testing.

But then I read all those articles about unit testing, testing in the agile team, … The e2e test no longer seems to be needed. Even more, end-to-end testing is considered harmful (by Steve Smith, Always Agile Consulting). But why?

I guess the main reason Steve dislikes e2e tests is that they tend to be inflexible, expensive, slow and complex, and that they serve as a fig leaf for thin coverage at the other test levels. They often require manual preparation, so they are no good for continuous integration. On the Google Testing Blog, Mike Wacker argues that a test strategy built around e2e tests will fail: it numbs the developer’s understanding of what needs to be tested. It gives the tester the false feeling of having finally tested “what is really relevant”, as the test is so close to the real world. And the main problem is that feedback comes late, which hinders root cause analysis of bugs.

Whew! Now I feel better. To me, these two opinions only show the risks of misusing e2e testing, and I have seen those risks materialize. But I still see the benefit. You have to plan e2e testing carefully and use it where it yields more benefit than it costs.

Shift left

Sure, we need to identify bugs early. Best would be not to have them at all, or at least to reduce their number from the beginning.

So at the time of requirements analysis and design (no matter which software development model you use), you get:

- Some technical architecture of the solution, which could be very fragmented: How will the modules of the solution be connected, and what interfaces will they have? Will you use external software or services?

- Some business architecture of the system, potentially also following inherent processes.

At the time of technical design, you define the modules and their interfaces, trying to cut the system into modules with few, well-defined interfaces. These modules are in turn cut into units in the same way. The main point is: in the end, you decompose the features into software modules with interfaces, their own code, and code used from somewhere else.

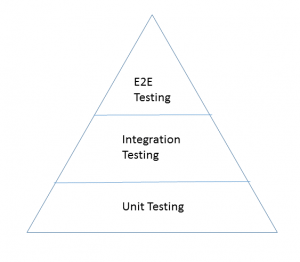

The standard test design approach would be to identify, for each requirement, the level at which it can be tested earliest. Ideally, this results in a testing pyramid with very few e2e tests, so that the requirements can be tested as early and as precisely as possible. This way, the e2e tests just add another level of integration and should only be used to test the specifics of that very level.
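The per-requirement decision can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a fixed method: the four levels, the `Requirement` fields and the rule in `earliest_level` (pick the smallest scope that contains everything a requirement touches) are all hypothetical. Counting the results per level then shows whether the plan has the intended pyramid shape.

```python
from collections import Counter
from dataclasses import dataclass, field
from enum import IntEnum


class Level(IntEnum):
    """Hypothetical test levels, ordered from earliest (cheapest) to latest."""
    UNIT = 1
    INTEGRATION = 2   # several units within one module
    SYSTEM = 3        # several modules or external services
    E2E = 4           # a complete business process


@dataclass
class Requirement:
    name: str
    units: set = field(default_factory=set)    # units whose code it touches
    modules: set = field(default_factory=set)  # modules it spans
    needs_external: bool = False               # relies on external software/services
    is_business_process: bool = False          # only observable across a whole process


def earliest_level(req: Requirement) -> Level:
    """Hypothetical rule: the smallest scope containing everything the requirement touches."""
    if req.is_business_process:
        return Level.E2E
    if req.needs_external or len(req.modules) > 1:
        return Level.SYSTEM
    if len(req.units) > 1:
        return Level.INTEGRATION
    return Level.UNIT


def level_counts(reqs) -> Counter:
    """Number of requirements assigned to each level (missing levels count as 0)."""
    return Counter(earliest_level(r) for r in reqs)


def is_pyramid(counts: Counter) -> bool:
    """True if no level has more tests than the level below it."""
    levels = list(Level)
    return all(counts[lower] >= counts[upper] for lower, upper in zip(levels, levels[1:]))


if __name__ == "__main__":
    # Made-up requirements, for illustration only.
    reqs = [
        Requirement("validate IBAN", {"iban"}, {"payments"}),
        Requirement("round amounts", {"amount"}, {"payments"}),
        Requirement("book transfer", {"iban", "ledger"}, {"payments"}),
        Requirement("notify customer", {"ledger", "mailer"}, {"payments", "notification"}),
        Requirement("onboarding to first transfer", {"kyc", "ledger"},
                    {"onboarding", "payments"}, is_business_process=True),
    ]
    counts = level_counts(reqs)
    for level in Level:
        print(f"{level.name:<12}{counts[level]}")
    print("pyramid shape:", is_pyramid(counts))
```

A plan with more e2e tests than system-level tests fails the `is_pyramid` check, which is one simple way to notice a drift toward the ice-cream cone.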

For applications with inherent business processes, it might be a good idea to design and build a process testing engine. The idea would then be to test the processes before they are implemented, just using their interface definitions and mocks.
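To make the process-testing idea more concrete, here is a minimal Python sketch that tests a business process before any module behind it exists, using only interface definitions and mocks. The process (`onboarding_process`) and the three interfaces are hypothetical examples; `unittest.mock.Mock` with `spec=` is the standard-library way of restricting a mock to the attributes of a given class. It shows only the core step, not a full process testing engine.

```python
from abc import ABC, abstractmethod
from unittest.mock import Mock


# Hypothetical interface definitions, agreed on during technical design.
class CustomerService(ABC):
    @abstractmethod
    def register(self, name: str) -> str: ...


class AccountService(ABC):
    @abstractmethod
    def open_account(self, customer_id: str) -> str: ...


class Notifier(ABC):
    @abstractmethod
    def send_welcome(self, customer_id: str, account_id: str) -> None: ...


def onboarding_process(customers: CustomerService, accounts: AccountService,
                       notifier: Notifier, name: str) -> str:
    """Hypothetical business process: register, open an account, send a welcome message."""
    customer_id = customers.register(name)
    account_id = accounts.open_account(customer_id)
    notifier.send_welcome(customer_id, account_id)
    return account_id


def test_onboarding_process() -> None:
    # spec= restricts each mock to the attributes of its interface definition,
    # so accessing a method that is not in the interface raises AttributeError.
    customers = Mock(spec=CustomerService)
    accounts = Mock(spec=AccountService)
    notifier = Mock(spec=Notifier)
    customers.register.return_value = "C-1"
    accounts.open_account.return_value = "A-1"

    assert onboarding_process(customers, accounts, notifier, "Alice") == "A-1"

    # Verify that the process drove the interfaces in the expected order of data flow.
    customers.register.assert_called_once_with("Alice")
    accounts.open_account.assert_called_once_with("C-1")
    notifier.send_welcome.assert_called_once_with("C-1", "A-1")


if __name__ == "__main__":
    test_onboarding_process()
    print("onboarding process verified against mocks")
```

Once the real modules exist, the same process test can be rerun against them, which moves it from a design check toward an actual e2e test.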

Test Automation and Dev Ops

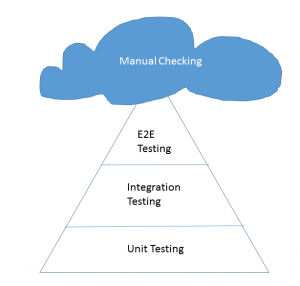

We all know that test automation is not always that easy to achieve. It may be easy at the unit level, but the more integration takes place, the more test environment setup is needed, the less flexible the automation gets, and the more difficult it is to repeat a test exactly as before. You would not really want to reset several databases… However, you might still want to include SOME e2e tests in the automated testing chain, for example those verifying vital application logic, as described by Bas Dijkstra. Also, I guess that even in a very mature Dev Ops-like environment you would not be in a position to automate everything, so I believe it is normal to end up with some kind of testing “volcano”, where some remaining tests require manual checking. Maybe one question to the readers: do you really rely 100% on your automated tests while doing Dev Ops?
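One way to express “include SOME e2e tests in the automated chain” is a small selection policy. The sketch below is an assumption, not a prescription: `PlannedTest`, its fields and the three buckets are hypothetical. Automated lower-level tests and vital automated e2e tests go into the pipeline, other automated e2e tests are deferred to a run outside the commit pipeline (how and when is left open), and tests that still need manual checking form the top of the volcano.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlannedTest:
    name: str
    level: str       # hypothetical: "unit", "integration", "system" or "e2e"
    vital: bool      # verifies vital application logic
    automated: bool  # runs without manual preparation or checking


def plan_test_chain(tests):
    """Split tests into (pipeline, deferred, manual) lists of names.

    Hypothetical policy: every automated lower-level test and only the vital
    automated e2e tests run in the pipeline; other automated e2e tests are
    deferred; tests that need manual checking stay outside automation.
    """
    pipeline, deferred, manual = [], [], []
    for t in tests:
        if not t.automated:
            manual.append(t.name)      # remaining manual checks
        elif t.level != "e2e" or t.vital:
            pipeline.append(t.name)    # part of the automated testing chain
        else:
            deferred.append(t.name)    # automated, but kept out of the chain
    return pipeline, deferred, manual


if __name__ == "__main__":
    # Made-up test inventory, for illustration only.
    tests = [
        PlannedTest("iban_checksum", "unit", vital=False, automated=True),
        PlannedTest("ledger_api_contract", "integration", vital=False, automated=True),
        PlannedTest("transfer_end_to_end", "e2e", vital=True, automated=True),
        PlannedTest("year_end_closing", "e2e", vital=False, automated=True),
        PlannedTest("printed_statement_layout", "e2e", vital=False, automated=False),
    ]
    pipeline, deferred, manual = plan_test_chain(tests)
    print("pipeline:", pipeline)
    print("deferred:", deferred)
    print("manual:  ", manual)
```

Keeping the policy in one function makes the decision explicit and reviewable, instead of letting the e2e share of the chain grow test by test.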

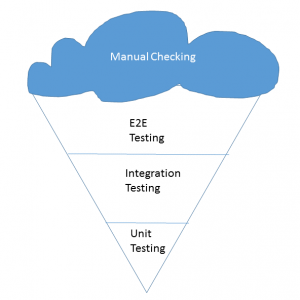

The Ice-Cream Cone

Having laid out all of this nice theory, let us take a look at reality. For some reason, our volcano cools down a lot and ends up as the famous software testing ice-cream cone (by Alister Scott on watirmelon.blog) that many of us have experienced before. Why is that so?

- Maybe the organization was not mature enough to implement a proper pyramid

- Maybe the organization did not invest in refactoring tests

- Maybe the system has existed for a long time, and the structure of the available tests reflects the ice-cream cone

- Maybe the testers did not have such a technical attitude, and it was easier for them to talk in business-level language

- Maybe the processes that really do need process interaction are really important, and limited time has given them priority

- Maybe the organizational setup lacked an atmosphere of trust, for example because a service provider delivered the code plus the lower-level tests

While I am not saying that all these potential reasons should be excused and resolved via e2e tests, I think we need to get acquainted with such situations in order to sort them out.

I will try to do that in further posts. One of them will give a real-life example of how a test plan was set up that uses business process tests at the right point in time. Another one will focus on the question of which requirements are candidates for an e2e test. But as I said above, I love this topic, and there is more to come.
